- Vitalik is confident that this chain would provide fraud-proof-free data.

- Buterin proposes a model for a sharded blockchain without committees, relying instead on availability checks.

- According to Vitalik, the plan would allow not only data to scale but also faster computation.

The Ethereum Research forum, a discussion platform for Ethereum-related research, recently featured a post by Ethereum's creator, Vitalik Buterin, on scalable data chains without committees.

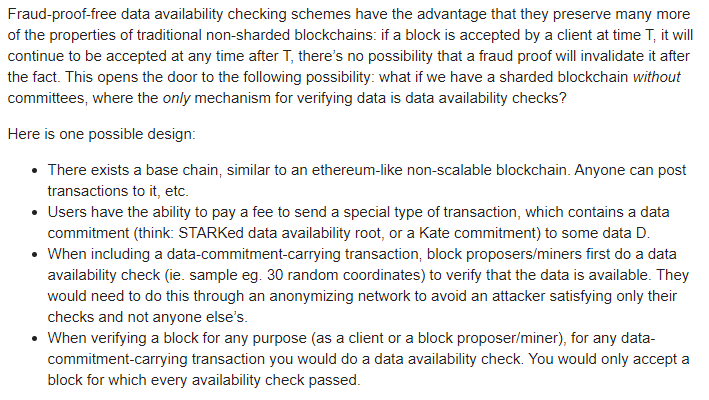

Vitalik is confident that this chain would provide fraud-proof-free data and would compare favorably with traditional non-sharded blockchains. On the 6th of January, following the discussion on the platform, Buterin also presented an outline of the scalable data chains.

He also expresses trust in the method, saying that the chains preserve more of the data in a much safer way. In the design he describes, users of a single base chain would pay to send a special type of transaction that lets them confirm the availability of the data.

Buterin proposes a model for a sharded blockchain without committees, relying instead on availability checks. To explain the model, he gives the example of an Ethereum-like non-scalable blockchain, where anyone can post transactions.

Buterin makes several further points in the same context:

The block proposers or miners could check data availability for the transactions. The co-founder said that this method would increase security, and that the technology would also prevent an attacker from interfering with anyone else’s checks.
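To make the idea of a proposer-side availability check more concrete, here is a minimal Python sketch. It is not taken from Buterin's post: it assumes, purely for illustration, that a check is done by randomly sampling chunks of the data, and every name in it (`sample_availability`, `miss_probability`, `ToyBaseChain`) is hypothetical.

```python
import random


def sample_availability(chunks, num_samples, rng):
    """Return True if every randomly sampled chunk is present.

    A withheld chunk is modelled as None (an assumption of this sketch).
    """
    indices = [rng.randrange(len(chunks)) for _ in range(num_samples)]
    return all(chunks[i] is not None for i in indices)


def miss_probability(withheld_fraction, num_samples):
    """Chance that independent samples all land on available chunks,
    so that withheld data goes unnoticed."""
    return (1.0 - withheld_fraction) ** num_samples


class ToyBaseChain:
    """Hypothetical base chain that only accepts data passing the check."""

    def __init__(self, num_samples=30, seed=0):
        self.blocks = []
        self.num_samples = num_samples
        self.rng = random.Random(seed)

    def propose(self, data_chunks):
        # The proposer includes the data only if the availability check passes.
        if not data_chunks:
            return False
        if sample_availability(data_chunks, self.num_samples, self.rng):
            self.blocks.append(list(data_chunks))
            return True
        return False


chain = ToyBaseChain()
accepted = chain.propose([b"chunk"] * 64)   # all chunks available
rejected = chain.propose([None] * 64)       # all chunks withheld
```

In this toy model, the more samples a proposer takes, the smaller the chance that partly withheld data slips through, which is what `miss_probability` computes.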

Agreement on the chain would allow layers to be built on top of it, since the blocks that have passed the availability checks would provide a base layer.

According to Vitalik, the plan would allow not only data to scale but also faster computation. The technique could replace “layer two relying on synchrony assumptions” and would also provide stability by having ~128 randomly selected validators that download and store the data.

Steve Anderson is an Australian crypto enthusiast. He has specialized in management and trading for over 5 years. Steve has worked as a crypto trader and loves learning about decentralisation and understanding the true potential of the blockchain.
